No-Code Scraper


What is No-code Scraper?

No-code Scraper lets you scrape websites using pre-made templates or a point-and-click browser extension. The tool requires no programming knowledge to use. It can return structured data in JSON or CSV, run scraping jobs periodically, and send results directly to your email or platforms like Zapier.

How to use No-code Scraper

With No-code Scraper, you can scrape in two ways:

- Using templates.

- Using the No-code Scraper browser extension.

Templates

Templates are pre-made web scrapers. They run in the cloud and have our proxy network built in. You can use templates to extract data from particular websites by simply entering a few parameters.

For example, here’s what it takes to retrieve the Google search results page for the query cat:

- Enter cat into the query field.

- Select a country.

- Select the domain (such as .com).

The No-code Scraper browser extension

The Chrome browser extension lets you build web scrapers for any website by clicking on the website’s elements. It can:

- Select all related elements, such as headlines or prices, with one click.

- Dynamically organize and preview your selection in Excel-like columns.

- Name the columns.

The extension uses your web browser to load pages, so it’s able to handle all website elements, including dynamically generated content.

You can run the extension on your computer to instantly download data in JSON or CSV. Or, you can select elements and let us deliver the data to you by creating a collection. This enables scheduling and other features.

More on No-code Scraper Extension.

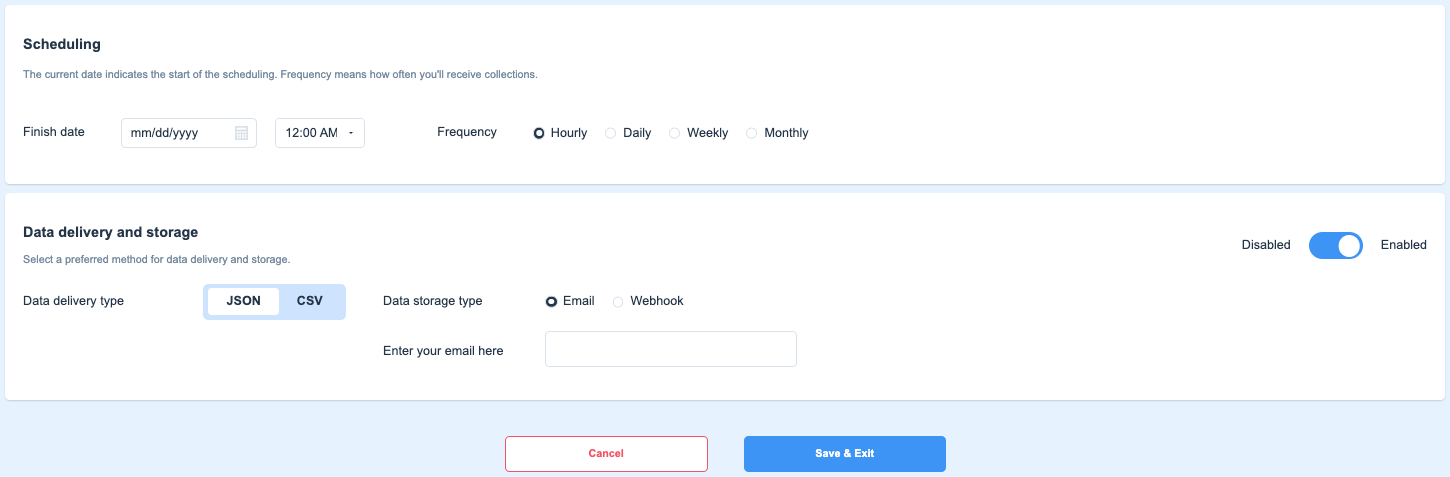

Schedule times & export options

No-code Scraper lets you set different options for each scraping task. You can schedule a task to run every hour, day, week, or month to get recurring results for the data you selected with the No-code Scraper extension.

You can disable the export option entirely by clicking the toggle in the right corner of No-code Scraper and setting it to Disabled. This hides the export options, but you can still download your collections from the main management window.

Schedule a scraping task in your Smartproxy dashboard

All scheduled tasks require a No-code Scraper subscription.

If you don't remember your No-code Scraper password, you can always reset it by clicking on Authentication method in the sidebar menu under the No-code Scraper tab and changing the password there.

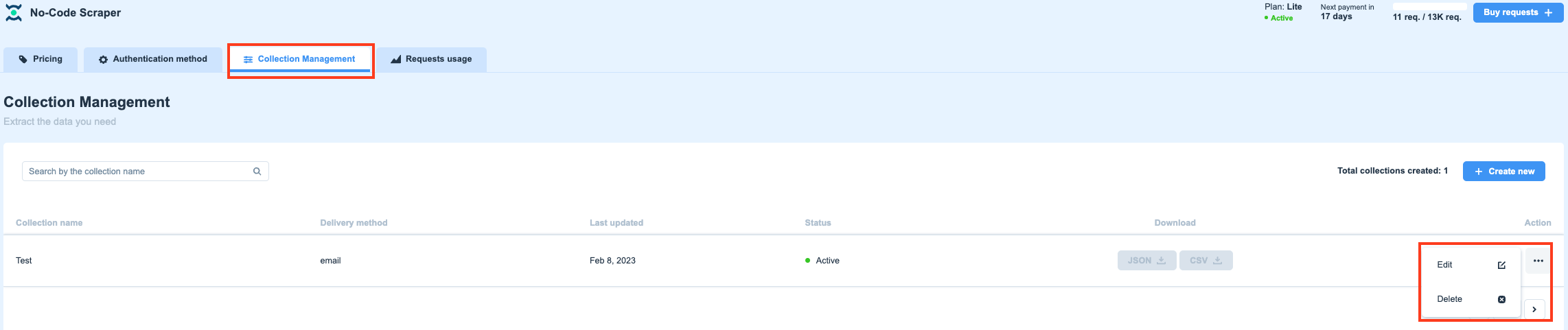

The main No-Code Scraper window

In the main window of No-Code Scraper, you can download your collections in JSON or CSV formats (even if you have disabled the export option when creating the collection). You can also see the status of your job and the date when it was last executed.
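If you download a collection as JSON and later need it as CSV, the conversion can be done locally. The sketch below assumes the export is a JSON array of flat objects, one per scraped row. The column names `headline` and `price` are hypothetical and stand in for whatever you named your columns; the actual export layout may differ.

```python
import csv
import io
import json


def records_to_csv(json_text: str) -> str:
    """Convert a JSON array of flat objects into CSV text."""
    records = json.loads(json_text)

    # Collect column names in first-seen order so no field is dropped
    # when some rows are missing a value.
    columns = []
    for record in records:
        for key in record:
            if key not in columns:
                columns.append(key)

    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=columns, restval="", lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


# Hypothetical export with two rows; the second row has no price.
sample = '[{"headline": "Cat toys", "price": "9.99"}, {"headline": "Cat food"}]'
print(records_to_csv(sample))
```

Rows that lack a column get an empty cell instead of raising an error, which is usually what you want when some pages do not contain every selected element.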

If you want to delete a particular job, simply click on the three dots and confirm that you want to delete it.

The Collection Management section of No-Code Scraper

Creating a collection from a template

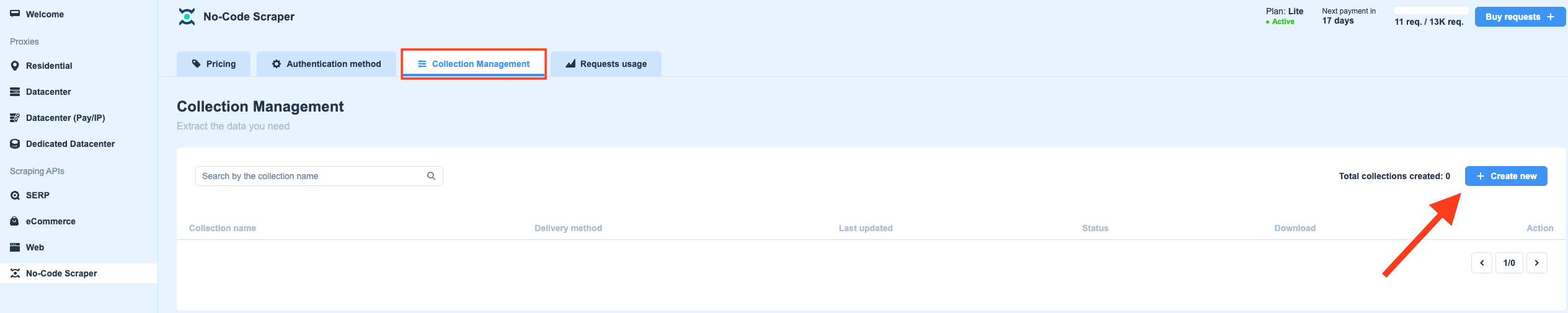

You can choose to create a collection straight from the dashboard by clicking on the Create new button.

Create a new collection in your Smartproxy dashboard

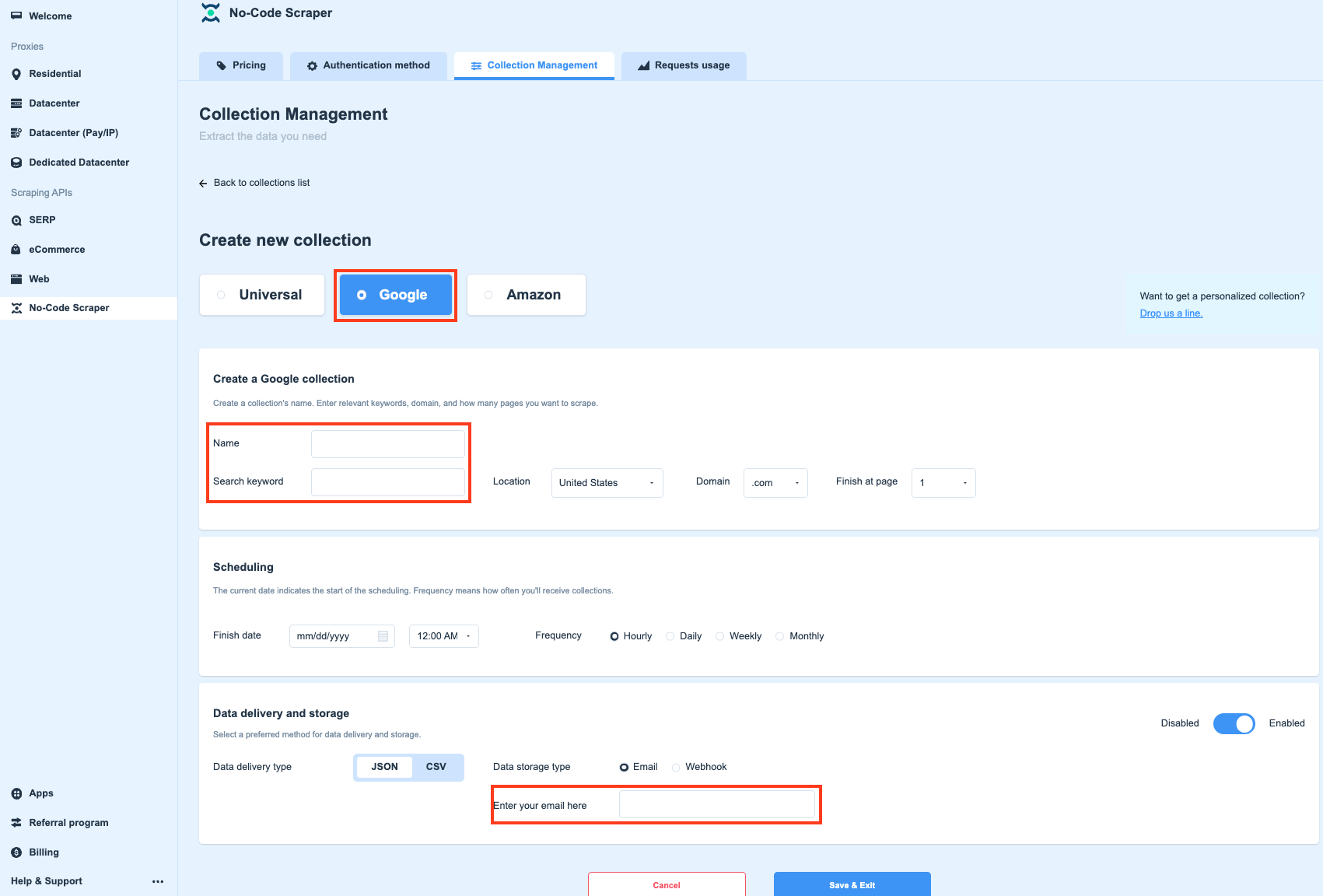

Creating a Google collection from a template

You can choose from either the Google or the Amazon template. The only difference is that the Google template allows you to choose a Location.

You only need to enter a name for your collection and the query you want to send to Google, then choose the location to send the request from and the domain to send it to.

The rest of the configuration is the same for both templates: select the frequency and finish date for your scheduled requests, then choose your delivery and storage types (if you keep the export option enabled).
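To get a feel for how many runs a given frequency and finish date imply, you can list the run times yourself. This is only an illustration, not how the service schedules jobs internally: the function name `run_times`, the frequency labels, and the assumption that the finish date is inclusive are all hypothetical. Monthly schedules are left out because month lengths vary.

```python
from datetime import datetime, timedelta

# Hypothetical frequency labels mapped to fixed intervals.
INTERVALS = {
    "hourly": timedelta(hours=1),
    "daily": timedelta(days=1),
    "weekly": timedelta(weeks=1),
}


def run_times(start: datetime, finish: datetime, frequency: str) -> list:
    """List every run from start up to and including finish."""
    step = INTERVALS[frequency]
    times = []
    current = start
    while current <= finish:  # assumes the finish date is inclusive
        times.append(current)
        current += step
    return times


runs = run_times(datetime(2024, 1, 1, 9), datetime(2024, 1, 22, 9), "weekly")
for run in runs:
    print(run.isoformat())
```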

Create a new collection using the Google template

Common errors

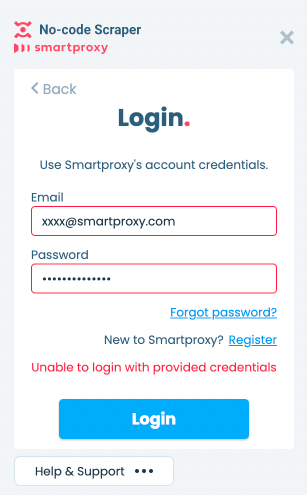

If you are having trouble logging in to the No-code Scraper extension, check that:

- You are using your email, and not your username;

- The password for your account is correct;

- You have an active No-code Scraper subscription.

To log in, make sure that you're using your email, the password is correct, and your subscription is active.
